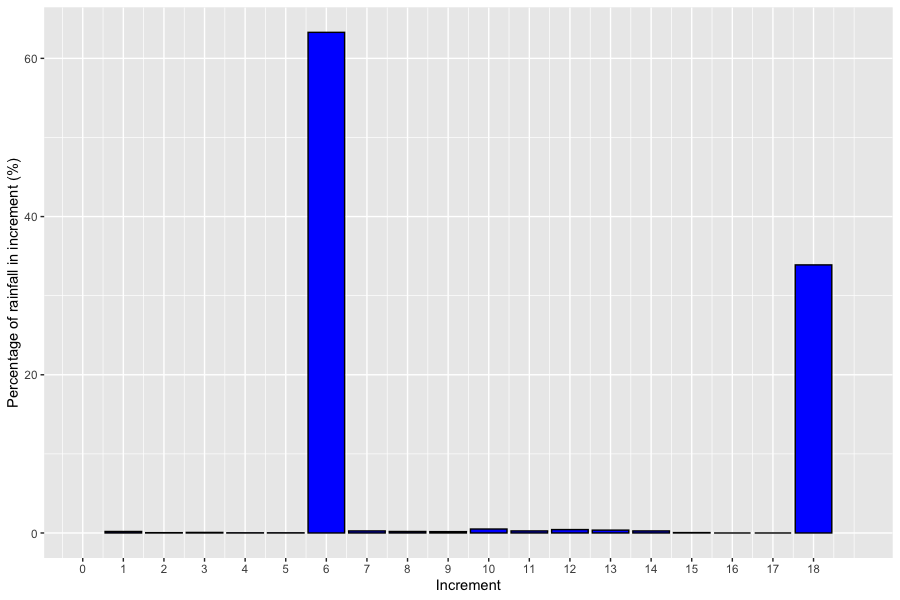

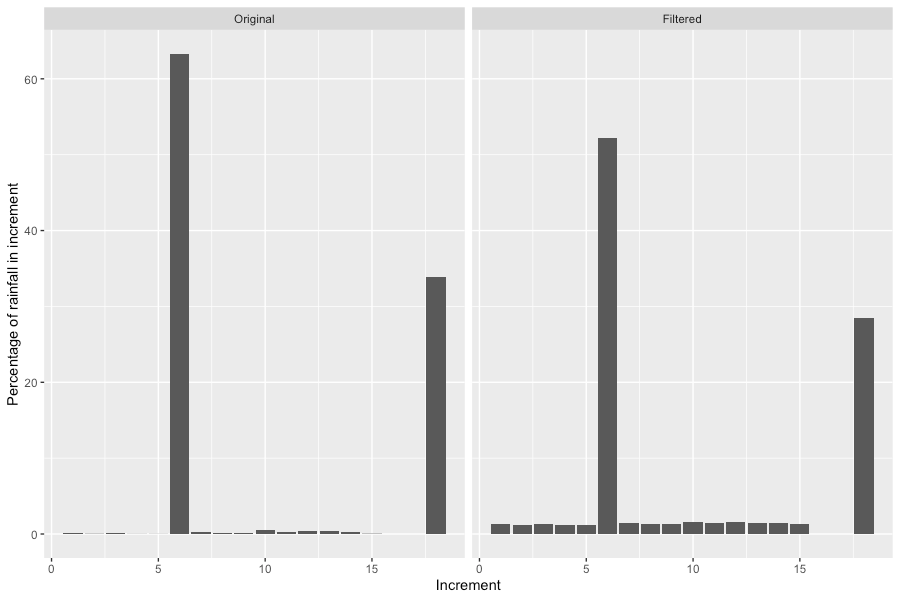

I’ve written before about a small number of areal temporal patterns available from the data hub that appear to contain gross errors. An example is areal pattern 5644: Murray-Darling Basin, 36 hour, 500 km² area, 18 x 2 hour increments (Figure 1).

Most rainfall (63.6 %) occurs in the 6th increment (between 10 and 12 hours) and 33.9 % occurs in the final increment (between 34 and 36 hours). There are 24 hours between the two large rainfalls, which suggests an error in the underlying data where a daily rainfall total has been mistaken for rainfall over a much shorter duration.
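A check like this can be automated. The following is a minimal sketch, not a data hub tool: it flags any pair of increments that sit exactly 24 hours apart and together carry most of the rainfall. The function name, the 90 % threshold and the even spread of the remaining 2.5 % over the other 16 increments are all assumptions for illustration; only the 63.6 % and 33.9 % values come from pattern 5644.

```python
def find_suspect_pairs(percentages, step_hours, share=0.9, gap_hours=24.0):
    """Return 1-based (i, j) increment pairs that are gap_hours apart and
    together hold at least `share` of the total rainfall."""
    total = sum(percentages)
    pairs = []
    for i in range(len(percentages)):
        for j in range(i + 1, len(percentages)):
            gap = (j - i) * step_hours
            combined = (percentages[i] + percentages[j]) / total
            if abs(gap - gap_hours) < 1e-9 and combined >= share:
                pairs.append((i + 1, j + 1))
    return pairs


# Pattern 5644: 18 x 2 hour increments.
# Increments 6 and 18 are from the post; spreading the remaining 2.5 %
# evenly over the other 16 increments is an assumption.
other = (100.0 - 63.6 - 33.9) / 16
pattern_5644 = [other] * 18
pattern_5644[5] = 63.6   # 6th increment, 10 to 12 hours
pattern_5644[17] = 33.9  # 18th increment, 34 to 36 hours

print(find_suspect_pairs(pattern_5644, step_hours=2.0))  # → [(6, 18)]
```

A flagged pair is only a prompt for a closer look; a genuine storm could also produce two bursts a day apart.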

So what effect does this have on modelled flood hydrographs? It turns out this is highly dependent on the continuing loss.

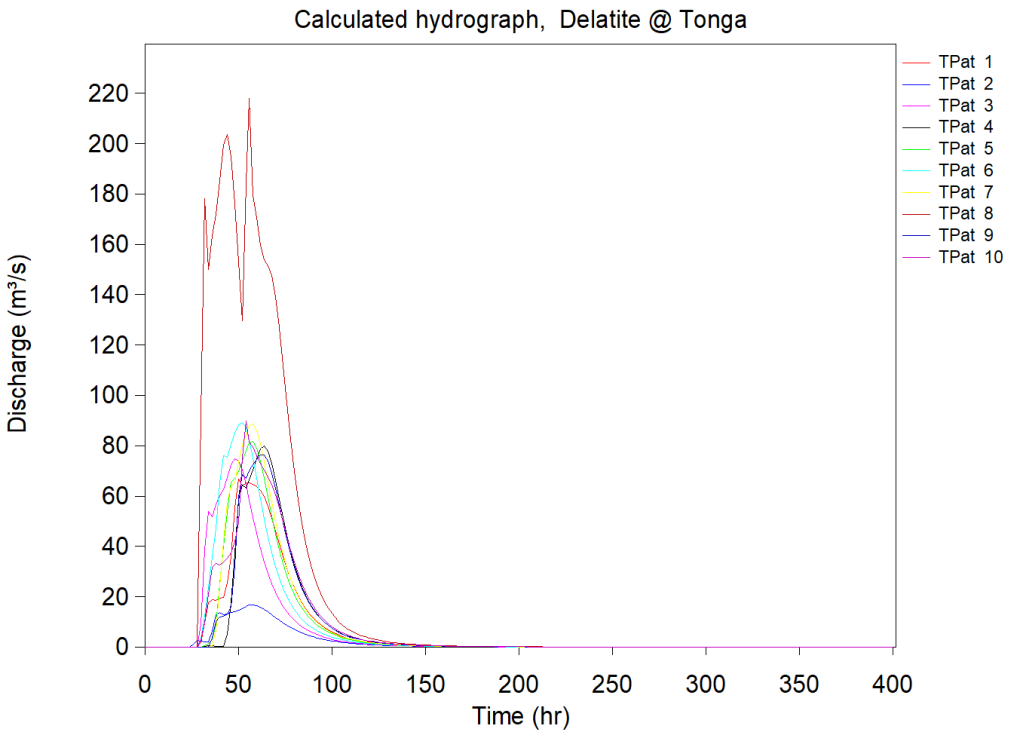

An ensemble run of a RORB model of the Delatite River at Tonga Bridge (1% AEP, 36 hour duration) is shown in Figure 2. The losses were taken from the data hub: initial loss = 27 mm, continuing loss = 4.3 mm/h. TPat 8, which has ID 5644, peaks at about 220 cumec, which is 130 cumec more than the next largest hydrograph.

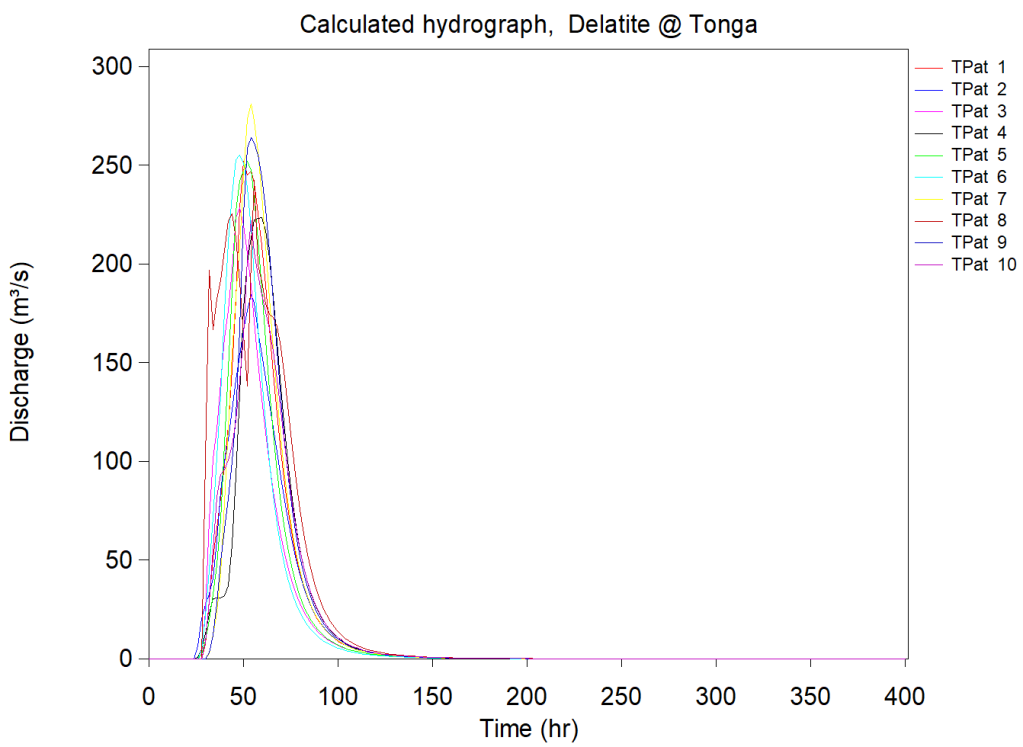

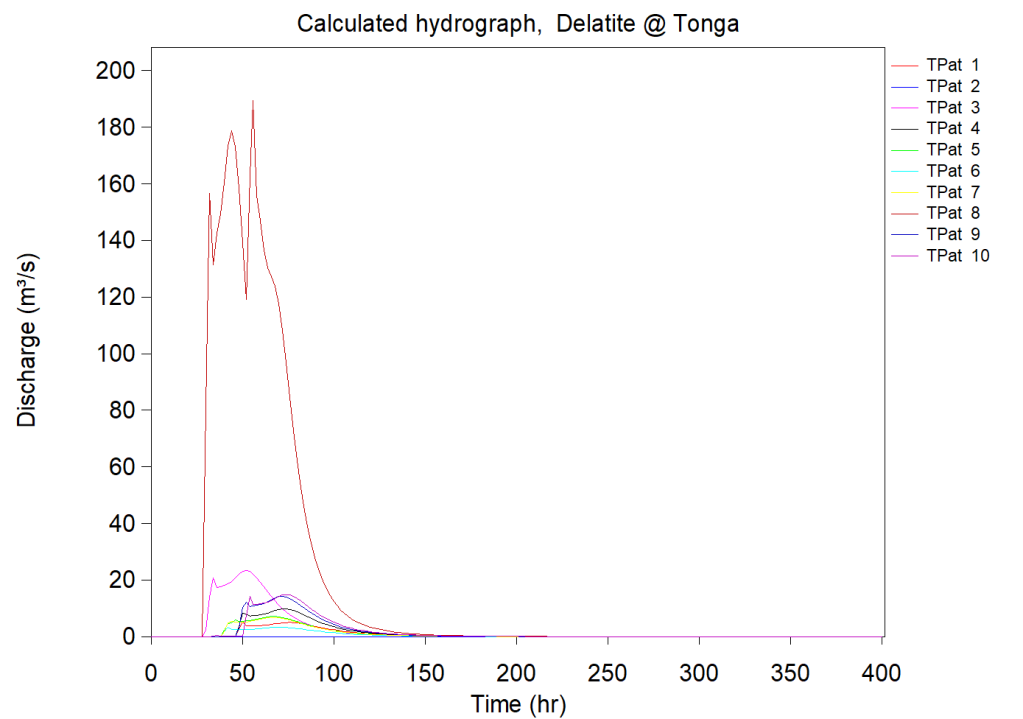

If the continuing loss (CL) is reduced to 2 mm/h, TPat 8 no longer dominates (Figure 3). Increasing CL to 7 mm/h means that TPat 8 completely dominates (Figure 4).
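One plausible reason for this sensitivity is that a continuing loss removes rainfall at a fixed rate, so it wipes out low-intensity rainfall but barely dents a pattern that packs nearly everything into two increments. The sketch below illustrates the idea with a simplified initial loss / continuing loss calculation. It is not the RORB loss routine and does no routing. The 150 mm storm depth, the uniform comparison pattern and the even spread of the minor increments are assumptions; the initial loss and the three CL values are those used in the post.

```python
def rainfall_excess(depths_mm, step_hours, initial_loss_mm, continuing_loss_mm_per_h):
    """Apply a simple initial loss / continuing loss model to incremental depths."""
    remaining_il = initial_loss_mm
    cl_per_step = continuing_loss_mm_per_h * step_hours
    excess = []
    for depth in depths_mm:
        absorbed = min(depth, remaining_il)  # initial loss takes rain first
        remaining_il -= absorbed
        left = depth - absorbed
        # Simplification: once any rain survives the initial loss, the full
        # continuing loss for the increment is charged against it.
        excess.append(max(0.0, left - cl_per_step) if left > 0 else 0.0)
    return excess


TOTAL_DEPTH_MM = 150.0   # hypothetical storm depth, not from the post
STEP_HOURS = 2.0
INITIAL_LOSS_MM = 27.0   # data hub value quoted in the post

# Pattern 5644 shape: only increments 6 and 18 are from the post.
other = (100.0 - 63.6 - 33.9) / 16
shape_5644 = [other] * 18
shape_5644[5], shape_5644[17] = 63.6, 33.9

# Hypothetical comparison pattern with rainfall spread evenly.
shape_uniform = [100.0 / 18] * 18


def total_excess(shape_percent, cl_mm_per_h):
    """Total rainfall excess (mm) for a pattern shape and continuing loss."""
    depths = [TOTAL_DEPTH_MM * p / 100.0 for p in shape_percent]
    return sum(rainfall_excess(depths, STEP_HOURS, INITIAL_LOSS_MM, cl_mm_per_h))


for cl in (2.0, 4.3, 7.0):
    print(f"CL {cl} mm/h: pattern 5644 {total_excess(shape_5644, cl):.1f} mm, "
          f"uniform {total_excess(shape_uniform, cl):.1f} mm")
```

Under these assumptions the uniform pattern loses all of its rainfall excess once CL exceeds its average intensity, while the concentrated pattern keeps most of its excess at every CL tested.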

Embedded burst filtering was turned on for these runs, but the effect of filtering on pattern 5644 was limited (Figure 5). The largest rainfall percentages have been reduced and spread out, but two increments still dominate the pattern and the hydrologic response, at least when continuing loss is high.

The upshots are:

- Undertaking an ensemble run with high continuing loss may highlight temporal patterns that require further checking.

- Turning on embedded burst filtering does not solve the issues associated with temporal patterns that contain gross errors.

- Hydrologic modellers should consider removing erroneous patterns before undertaking model runs. There is a list of 19 patterns that appear to contain errors here.
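Removing flagged patterns can be as simple as filtering the ensemble by pattern ID before the runs are set up. This is a minimal sketch with a hypothetical data structure (a list of dictionaries); it does not read or write any real RORB or data hub file format. Only ID 5644 (TPat 8) comes from the post, the second pattern is made up, and the other 18 suspect IDs would need to be added from the linked list.

```python
# Only 5644 is named in the post; add the other 18 IDs from the linked list.
SUSPECT_PATTERN_IDS = {5644}


def drop_suspect_patterns(patterns, suspect_ids=SUSPECT_PATTERN_IDS):
    """Split an ensemble into (kept, removed) lists by pattern ID."""
    kept = [p for p in patterns if p["id"] not in suspect_ids]
    removed = [p for p in patterns if p["id"] in suspect_ids]
    return kept, removed


# Hypothetical ensemble: ID 1001 is invented for illustration.
ensemble = [
    {"id": 1001, "label": "TPat 1"},
    {"id": 5644, "label": "TPat 8"},
]

kept, removed = drop_suspect_patterns(ensemble)
print("Run:", [p["label"] for p in kept])
print("Removed (record in the report):", [p["label"] for p in removed])
```

Returning the removed patterns as well as the kept ones makes it easy to document which patterns were excluded and why.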

Hi Tony, thanks for the reminder on this. It would seem like a relatively minor job to replace the 19 temporal patterns you have identified with other representative temporal patterns. I’d presume most providers have probably done this themselves by changing the increment files and either copying one of the other 9 temporal patterns or creating an average one to replace the bad one. I wonder if we could just agree to adopt something for now until the next update?

Do you know what the process is for getting these minor updates to ARR done? I wonder if in future ARR updates, we should set aside a portion of funding for “maintenance” work.

Thanks Ben. Regarding the procedure for suggesting changes to ARR, looking at the ARR website, the contact email is hazards@ga.gov.au. I’ve forwarded a link to this blog and suggested the 19 problem patterns be replaced.

Turns out GA has no responsibility for the data hub. All enquiries should be sent to admin@arr-software.org. I’ve sent an email noting the need to replace the 19 problem patterns.

Thank you for highlighting the issues, Tony. It would be great if someone could look at the problem and replace the rogue patterns. However, I doubt this will happen soon. Hence, for the time being, as an engineer, I reckon that I will simply remove those rogue patterns from the list of storms to run, and note and cite this discussion in the technical report!